Simulations enable AI Managers to proactively understand how configuration changes impact AI Agent behavior. By creating and running test cases, you can verify that updates improve behavior without causing regressions.

Simulations differ from Interactive Testing in that they run test cases as multi-turn conversations in bulk automatically, rather than manually and one at a time.

Use Simulations for systematic validation at scale—including regression testing, compliance audits, and deployment readiness.

Use Interactive Testing for quick checks and qualitative exploration.

When you modify an AI Agent, whether by updating Knowledge, adjusting Actions, or refining Playbooks, predicting the full impact across all support scenarios is difficult. Simulations address this challenge by allowing you to define a set of test cases, simulate end-user inquiries, and evaluate the AI Agent’s simulated responses against the expected outcomes you define.

With Simulations, you can:

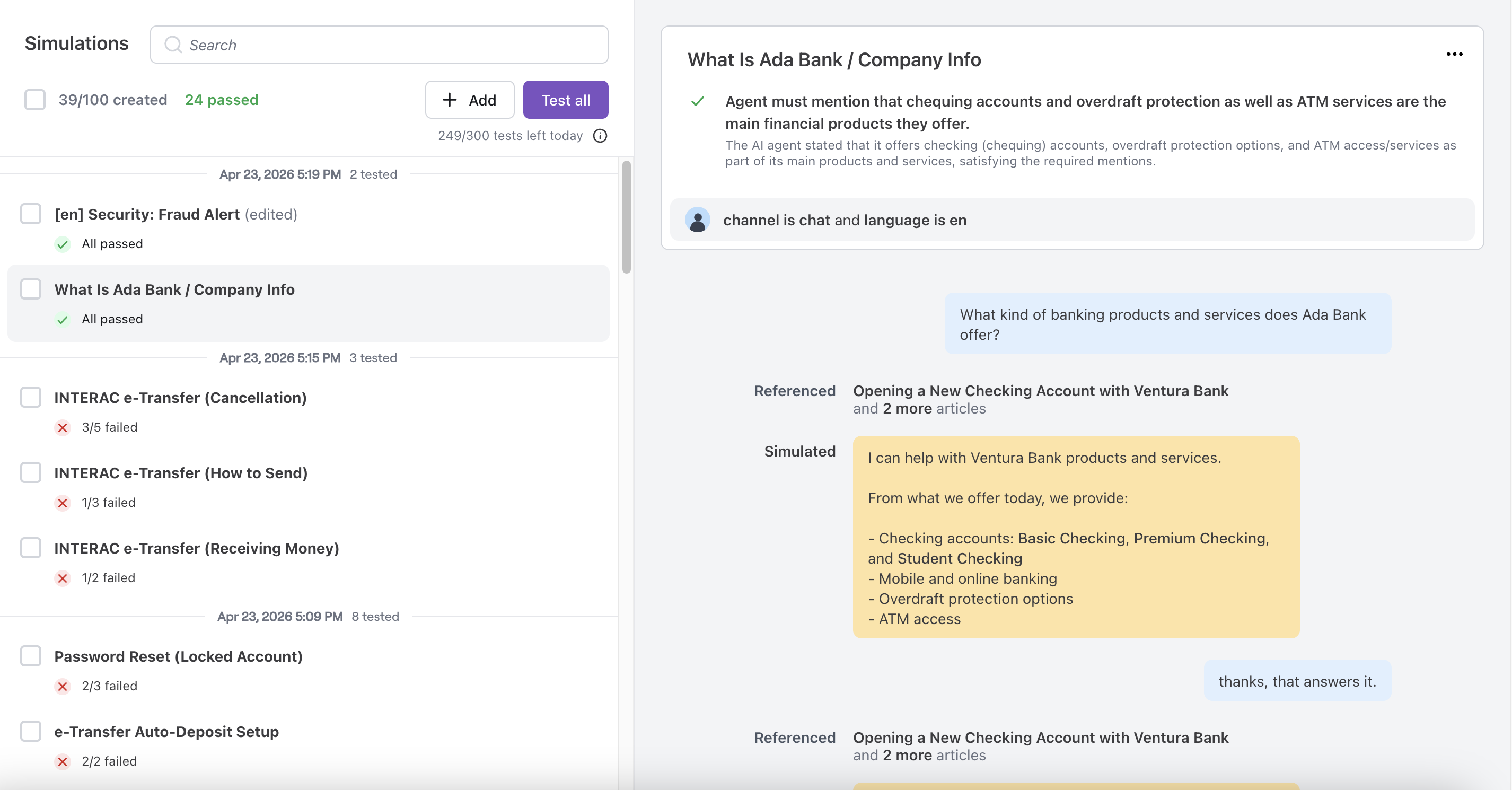

You can run up to 300 test runs per day and maintain up to 100 test cases per instance. If you need additional capacity, contact your Ada representative.
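As a rough planning aid, the arithmetic behind these limits can be sketched in Python. This is an illustration only, not part of Ada's product or API. The function names are hypothetical, and the sketch assumes that each test case executed counts as one test run against the daily limit, which this page does not state explicitly.

```python
DAILY_RUN_LIMIT = 300  # test runs per day, from the limits above
MAX_TEST_CASES = 100   # test cases per instance, from the limits above


def runs_remaining(runs_used_today: int) -> int:
    """Return how many test runs are left in today's quota (never negative)."""
    return max(DAILY_RUN_LIMIT - runs_used_today, 0)


def full_suite_passes_available(suite_size: int, runs_used_today: int = 0) -> int:
    """Return how many times a suite of `suite_size` cases can still run today.

    Assumption: one executed test case consumes one test run.
    """
    if not 1 <= suite_size <= MAX_TEST_CASES:
        raise ValueError(f"suite_size must be between 1 and {MAX_TEST_CASES}")
    return runs_remaining(runs_used_today) // suite_size
```

Under that assumption, a full suite of 100 test cases could be run three times in a day before the quota is exhausted.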

Simulations have the following constraints:

Both simulated responses and evaluations are powered by generative AI. Some minor variability in responses and evaluation results is expected between test runs. To improve consistency, use clear and specific expected outcomes, ensure relevant Coaching and Custom Instructions are in place, and re-run tests periodically to observe trends over time.
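One way to observe trends across repeated runs is to track a pass rate per test case. The sketch below is a hypothetical offline analysis, not an Ada feature. It assumes you have recorded results yourself as `(test case name, passed)` pairs, for example by hand or from exported test data.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def pass_rates(history: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the fraction of passing runs for each test case name."""
    counts = defaultdict(lambda: [0, 0])  # name -> [passes, total runs]
    for name, passed in history:
        counts[name][1] += 1
        if passed:
            counts[name][0] += 1
    return {name: passes / total for name, (passes, total) in counts.items()}


def inconsistent_cases(history: Iterable[Tuple[str, bool]]) -> List[str]:
    """Return test cases that both passed and failed across the recorded runs."""
    rates = pass_rates(history)
    return sorted(name for name, rate in rates.items() if 0 < rate < 1)
```

A test case that passes in some runs and fails in others is a candidate for a more specific expected outcome or additional Coaching.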

Tests run separately from live traffic and do not affect production performance. You can run tests at any time without impacting end-user conversations.

Simulated conversations run as multi-turn exchanges, capped at 40 turns. The simulated end user responds based on the Scenario you define, and the AI Agent uses its full production capabilities. The conversation ends when the Agent resolves the inquiry, reaches a handoff, or hits the 40-turn cap.
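The stopping rules described above can be modeled as a simple loop. This is a conceptual sketch, not Ada's implementation. The callables `end_user_reply` and `agent_reply` and the status strings are hypothetical stand-ins, and the sketch assumes one turn is one end-user message plus one AI Agent reply.

```python
from typing import Callable, List, Tuple

MAX_TURNS = 40  # turn cap stated above


def simulate(
    first_message: str,
    end_user_reply: Callable[[str], str],
    agent_reply: Callable[[str], Tuple[str, str]],
) -> Tuple[List[Tuple[str, str]], str]:
    """Run a simulated conversation until resolution, handoff, or the turn cap.

    `agent_reply` returns (reply_text, status), where status is one of
    "continue", "resolved", or "handoff" (hypothetical labels).
    """
    transcript: List[Tuple[str, str]] = []
    message = first_message
    for _ in range(MAX_TURNS):
        reply, status = agent_reply(message)
        transcript.append((message, reply))
        if status != "continue":
            return transcript, status  # resolved or handed off
        message = end_user_reply(reply)  # simulated end user follows the Scenario
    return transcript, "turn_cap"
```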

Simulations support several common workflows:

Simulations provide automated conversation testing capabilities through structured test cases.

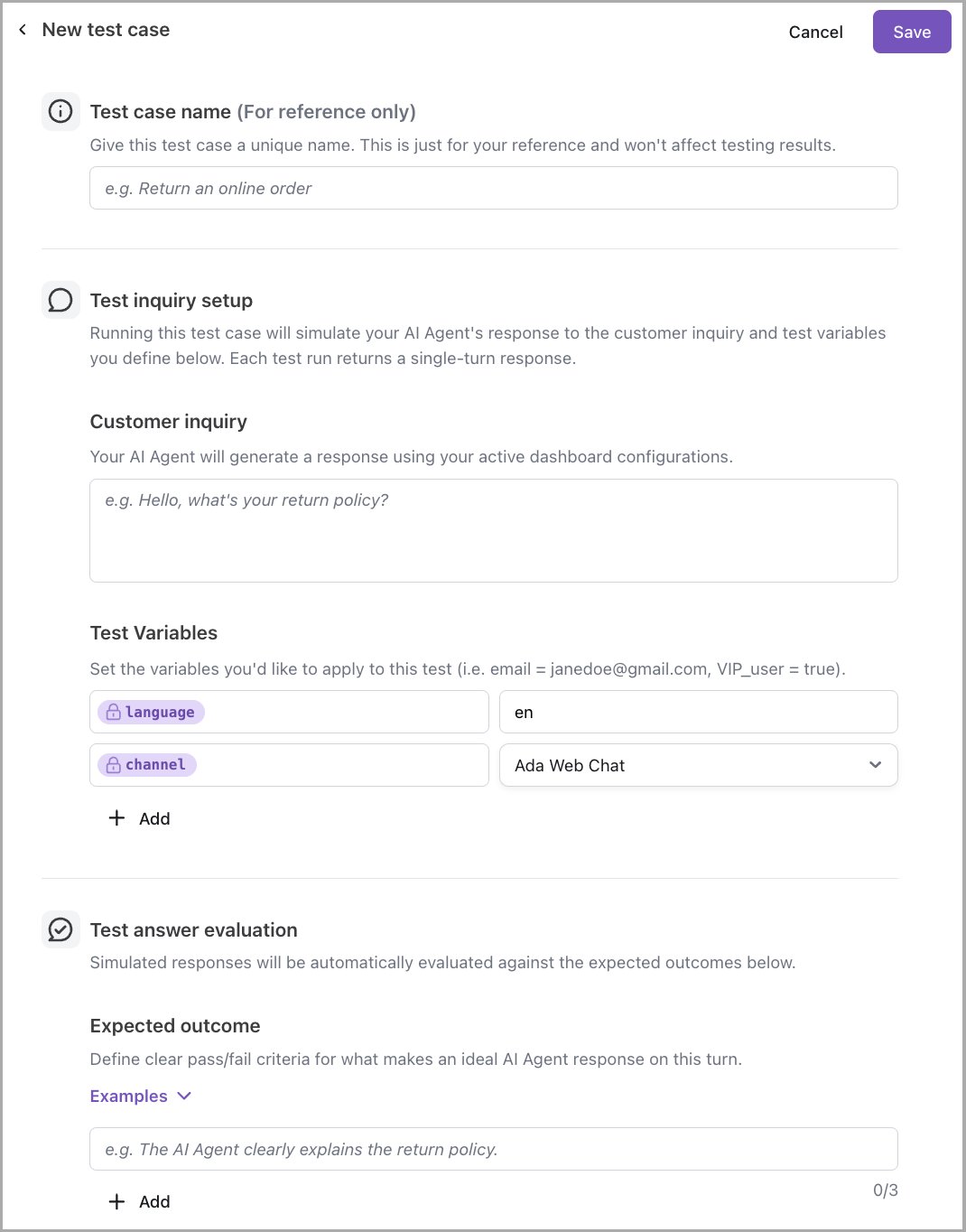

Each test case includes the following elements:

The following examples illustrate how to structure test cases for common scenarios:

Each test run generates pass/fail results for individual expected outcomes and an overall status for the test case. Results also include a rationale explaining each judgment and a list of generative entities (Knowledge, Actions, Playbooks, etc.) used to produce the response. For more details, see Review results.

Get started with Simulations in just a few steps. For detailed instructions, see Implementation & usage.

In your Ada dashboard, navigate to Simulations and click Add. Enter a Test case name, a Customer inquiry, and a Scenario describing the end user’s goal and behavior across turns. Select the Language and Channel (Web Chat, Email, or Voice), and define at least one Expected outcome. Then click Save.

Select your test case and click Test. The AI Agent runs a multi-turn simulation and evaluates the transcript against your expected outcomes.

Create test cases, run tests, and review results to validate your AI Agent’s responses.

Test cases define the end-user inquiry and expected outcome that the AI Agent’s response is evaluated against. Each test case captures a specific scenario you want to validate, making it reusable for regression testing and ongoing verification.

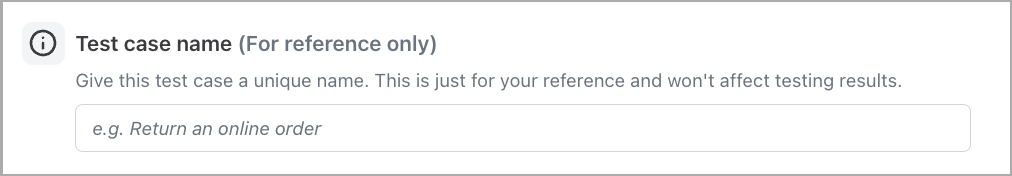

The Test case name should be descriptive and clearly reflect the scenario being tested, for example, "Refund policy accuracy" or "Password reset initiation". A clear name makes it easier to identify test cases when running batches or reviewing results.

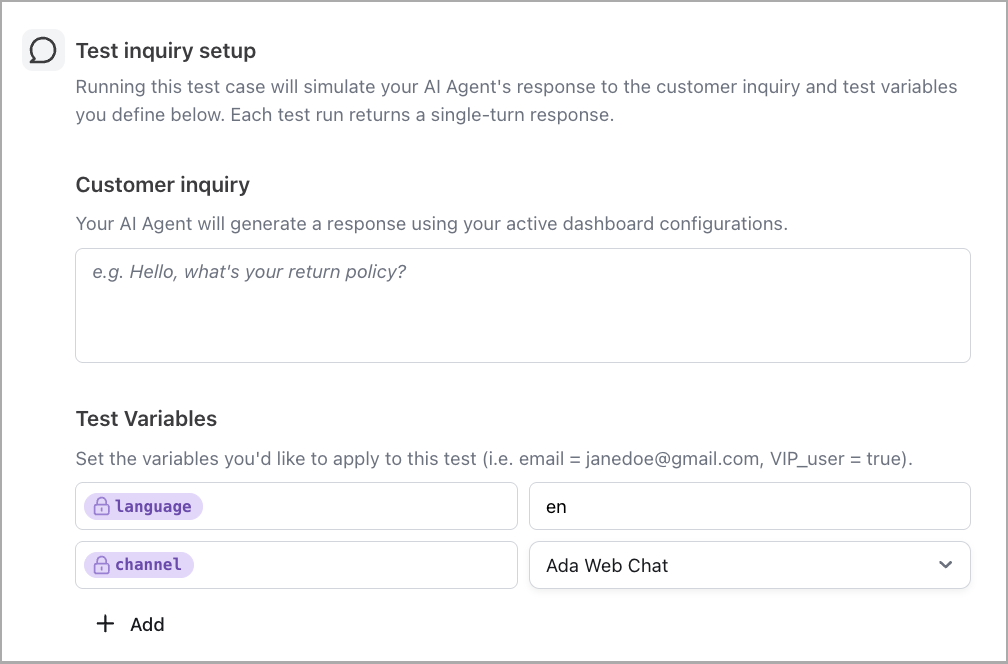

Test inquiry setup defines what the AI Agent receives and the context in which it responds.

Customer inquiry: The message the AI Agent receives, simulating what an end user would send. This should reflect realistic phrasing and context.

Test Variables: Variables allow test cases to simulate specific end-user contexts, such as language preferences or channel type. Adjusting values like language and channel helps ensure the AI Agent’s response reflects real-world conditions.

The Scenario describes the simulated end user’s goal, context, and how they should respond across turns. It drives the simulated end user’s behavior throughout the multi-turn conversation, so realistic scenarios produce more representative results.

A well-written Scenario:

For detailed guidance, see Scenarios in the best practices guide.

Test cases created before multi-turn Simulations launched remain runnable. Their existing Customer inquiry is reused as the Scenario for simulated runs.

The next time you edit a pre-existing test case, you are required to add a Scenario before saving.

To populate Scenarios for existing test cases in bulk, use the MCP Server rather than editing each test case individually.
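The fallback behavior for older test cases can be summarized in a few lines. This is a hypothetical model of the rules described above, not Ada's code. The dictionary keys `scenario` and `customer_inquiry` are assumed names chosen for illustration.

```python
from typing import Dict, Optional


def effective_scenario(test_case: Dict[str, Optional[str]]) -> str:
    """Return the Scenario used for a run.

    Test cases without a Scenario fall back to their Customer inquiry.
    """
    scenario = (test_case.get("scenario") or "").strip()
    return scenario or (test_case.get("customer_inquiry") or "")


def can_save_edit(test_case: Dict[str, Optional[str]]) -> bool:
    """Editing a test case requires a non-empty Scenario before saving."""
    return bool((test_case.get("scenario") or "").strip())
```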

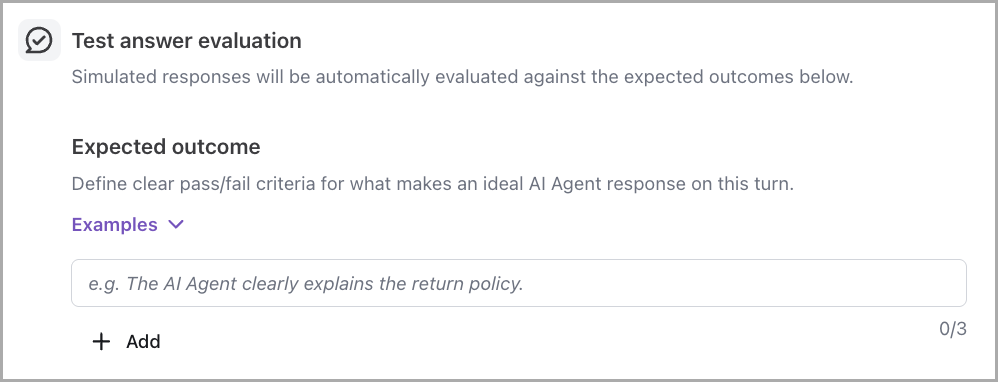

Test answer evaluation defines what the AI Agent’s response must achieve to pass. The AI Agent’s response is evaluated against each criterion independently, producing:

A pass/fail result per criterion

An overall pass/fail for the test case

A rationale explaining each judgment

Expected outcomes: Each test case requires at least one expected outcome and supports up to ten. Write outcomes that are specific and measurable. For example, instead of "responds helpfully", use "provides the return policy timeframe" or "includes a link to a help article". Clear, well-defined outcomes produce more reliable pass/fail evaluations and more meaningful rationales.
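To make the shape of a test case concrete, the fields described above can be modeled as a small data structure with basic checks. This is an illustrative sketch for drafting test cases outside the dashboard, not Ada's schema. The class name, field names, and `problems` method are hypothetical. Only the channel list and the limit of one to ten expected outcomes come from this page.

```python
from dataclasses import dataclass, field
from typing import List

CHANNELS = ("Web Chat", "Email", "Voice")  # channels listed on this page
MIN_OUTCOMES, MAX_OUTCOMES = 1, 10         # expected outcome limits from this page


@dataclass
class SimulationTestCase:
    name: str
    customer_inquiry: str
    scenario: str
    language: str
    channel: str
    expected_outcomes: List[str] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return a list of human-readable issues; empty means the draft looks valid."""
        found = []
        for label in ("name", "customer_inquiry", "scenario", "language"):
            if not getattr(self, label).strip():
                found.append(f"{label} is empty")
        if self.channel not in CHANNELS:
            found.append(f"channel must be one of {CHANNELS}")
        if not MIN_OUTCOMES <= len(self.expected_outcomes) <= MAX_OUTCOMES:
            found.append("expected_outcomes must contain 1 to 10 entries")
        return found
```

A draft such as `SimulationTestCase("Refund policy accuracy", "Can I return my order?", "The end user wants to know the return window.", "English", "Web Chat", ["provides the return policy timeframe"])` reports no problems, while a draft with no expected outcomes or an unlisted channel is flagged.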

You can add test cases from the Simulations page in your Ada dashboard.

To create a test case:

You can modify or remove existing test cases from the Simulations page.

To edit a test case:

To delete a test case:

Test runs execute one or more test cases against the currently published AI Agent configuration. Each test simulates a multi-turn conversation of up to 40 turns between the simulated end user and the AI Agent, then evaluates the transcript against the defined expected outcomes.

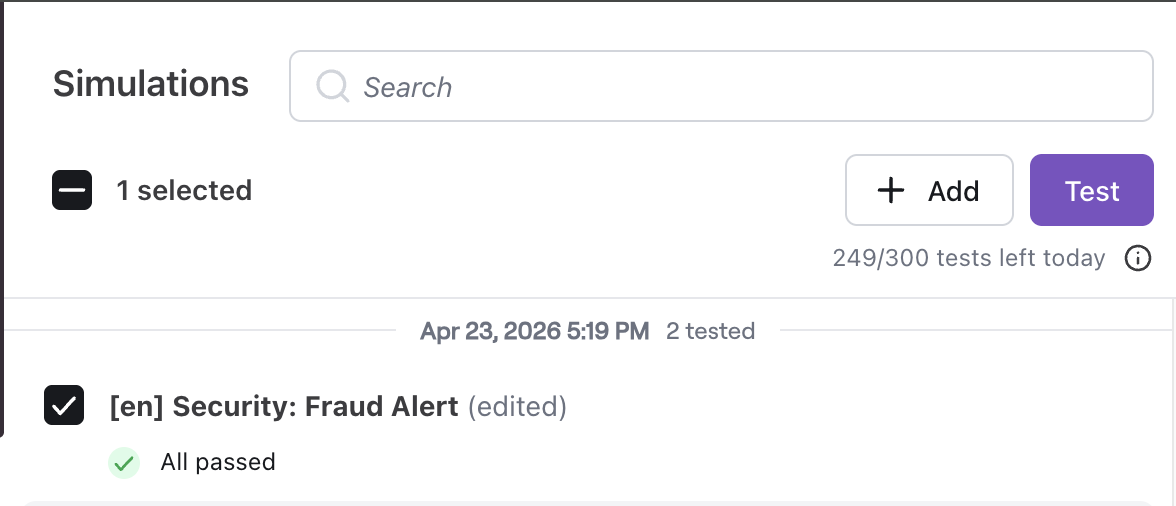

Tests can be run individually to validate specific scenarios, or in batches to evaluate broader coverage. Batch testing is useful for regression testing after configuration changes or for validating deployment readiness across multiple scenarios at once.

Selecting multiple test cases and running them together produces a consolidated test run with results for each case.

Test runs execute separately from live traffic and do not affect production performance.

To run tests:

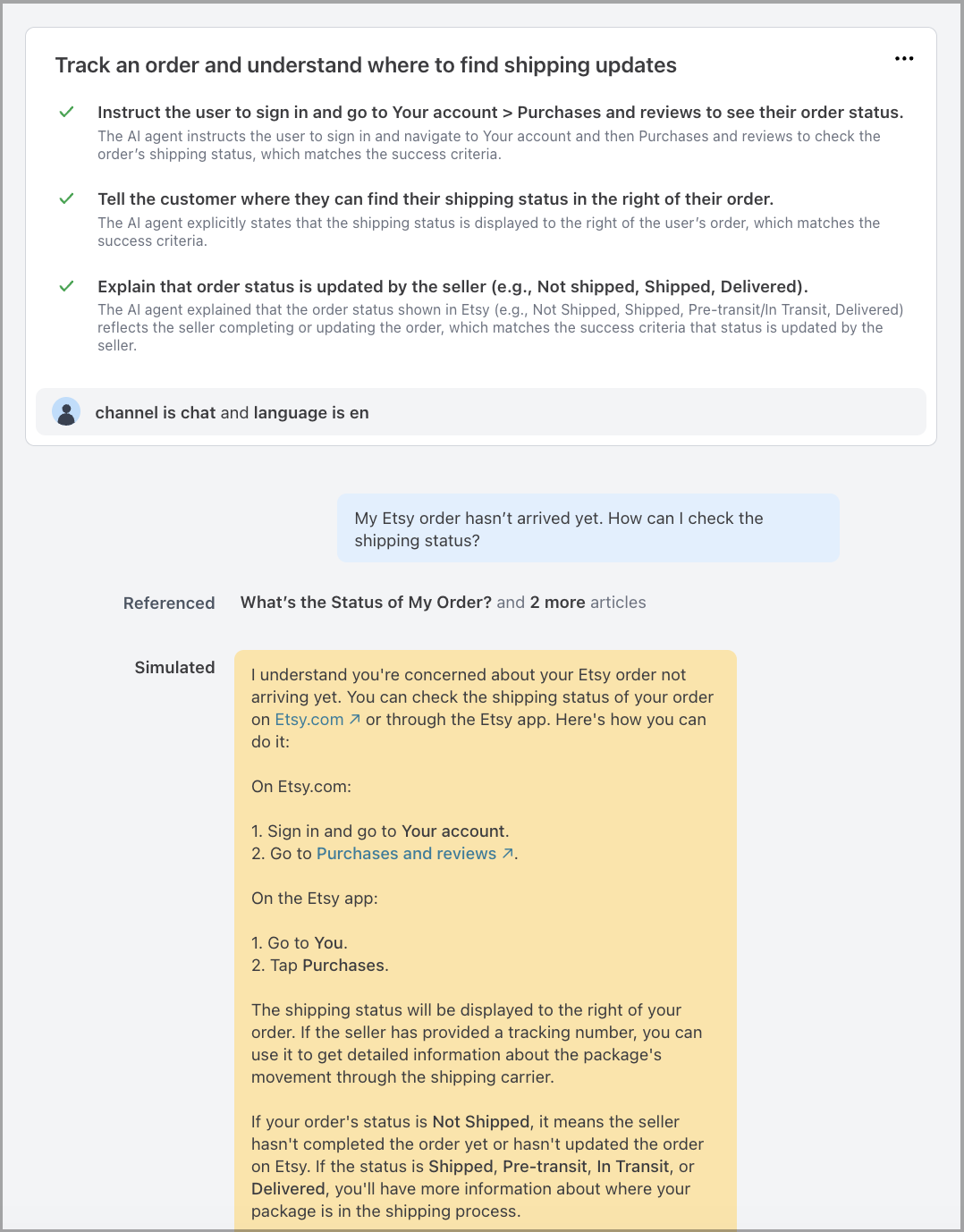

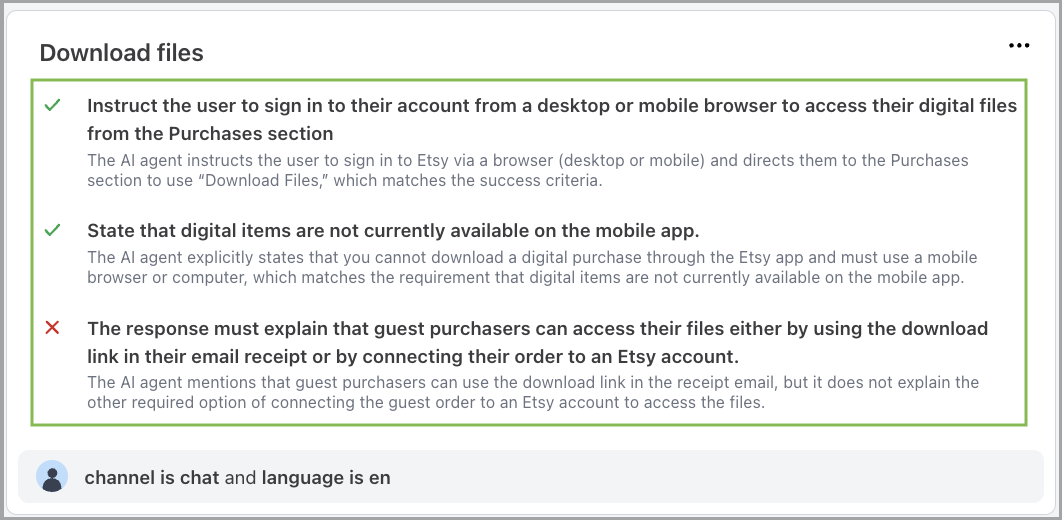

Test results provide visibility into how the AI Agent performed against each expected outcome. Results include pass/fail status, evaluation rationale, and details about which generative entities the AI Agent used to generate its response.

Each test case displays an overall pass/fail status based on whether the AI Agent’s response met all defined expected outcomes. Individual criterion results are also available, allowing you to identify which specific expectations passed or failed.
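The relationship between criterion results and the overall status is an all-must-pass rule, which can be expressed as follows. The function names and the mapping of expected outcome text to a boolean are hypothetical conventions chosen for illustration, not Ada's result format.

```python
from typing import Dict, List


def overall_status(criterion_results: Dict[str, bool]) -> str:
    """Return "pass" only if every expected outcome passed, otherwise "fail"."""
    if not criterion_results:
        raise ValueError("a test case needs at least one expected outcome")
    return "pass" if all(criterion_results.values()) else "fail"


def failed_criteria(criterion_results: Dict[str, bool]) -> List[str]:
    """List the expected outcomes that did not pass, in their original order."""
    return [outcome for outcome, passed in criterion_results.items() if not passed]
```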

Clicking into a test case reveals additional context:

Conversation transcript: The full multi-turn exchange between the simulated end user and the AI Agent, including every message from both sides.

Audio playback (Voice channel only): A simulated audio recording of the AI Agent’s and simulated end user’s turns. The AI Agent’s responses are generated using the configured speaking voice for the selected language, while the simulated end user uses one of four default voices.

Evaluation rationale: An explanation for each criterion judgment, describing why the response passed or failed.

Generative entities used: A list of Knowledge, Actions, Playbooks, and other configuration elements that contributed to the response.

To review test results:

Failed test cases highlight areas where the AI Agent’s behavior does not meet expectations. The results provide the context needed to diagnose issues and make targeted improvements.

Each test result includes an evaluation rationale that explains why each criterion passed or failed. The rationale provides insight into the AI Agent’s reasoning and helps identify whether the issue stems from missing Knowledge, incorrect Action behavior, Playbook logic, or other configuration.

Test results include direct links to the generative entities—such as Knowledge articles, Actions, or Playbooks—that contributed to the response. These links provide quick access to the relevant configuration, making it easier to locate and update the source of an issue.

When a test case fails because of how the AI Agent responded, you can apply Coaching directly from the simulated conversation without leaving the Simulations workflow.

The coaching button appears on applicable AI Agent messages in the simulated conversation, the same way it appears in the Conversations view. Coaching applied here:

To apply Coaching from a simulated conversation:

For details on writing effective coaching, see Coaching best practices.

Re-running test cases after making changes confirms whether updates resolved the issue. This cycle of testing, diagnosing, and improving supports continuous refinement of AI Agent behavior over time.
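When comparing a run from before a change with a run after it, the useful categories are test cases that were fixed, test cases that regressed, and test cases that are still failing. The sketch below assumes you have captured each run's overall statuses yourself as a mapping of test case name to pass/fail. The function name and data shape are hypothetical, not an Ada feature.

```python
from typing import Dict, List


def compare_runs(before: Dict[str, bool], after: Dict[str, bool]) -> Dict[str, List[str]]:
    """Classify test cases present in both runs by how their status changed."""
    shared = before.keys() & after.keys()
    return {
        "fixed": sorted(n for n in shared if not before[n] and after[n]),
        "regressed": sorted(n for n in shared if before[n] and not after[n]),
        "still_failing": sorted(n for n in shared if not before[n] and not after[n]),
    }
```

Because generative evaluation varies slightly between runs, a single regressed result is worth re-running before treating it as a real regression.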

These features complement Simulations and support AI Agent optimization:

Interactive Testing: Test your AI Agent in real time by chatting with it directly, simulating different user types with variables.

MCP Server: Retrieve test cases, test run results, and quota information, or export test data as CSV through a connected AI assistant.