Improving your AI Agent starts with understanding how end users interact with it. Under Analytics, the Topics tab is especially valuable because it highlights where conversations cluster, how automation is performing, and where satisfaction may be slipping. Combined with other reporting tools, it gives AI Managers a clear path to identify gaps and prioritize improvements.

Topic titles and descriptions

Follow these recommendations to create effective titles and descriptions that help your AI Agent accurately assign conversations to the right Topics.

General guidelines

- Be specific: Use clear and specific language in your topic and category descriptions. This helps the AI understand the intent behind end user inquiries better.

- Include key phrases: Incorporate key phrases or triggers that end users might use when asking questions related to the topic. For example, for a topic on password resets, include phrases like “forgot my password” or “reset password”.

- Sample questions: Provide sample questions that end users might ask. This can guide the AI in recognizing similar inquiries.

- Macro to micro approach: Start with broader topics (macro) and then break them down into more specific sub-topics (micro) as needed. This hierarchical structure can help in organizing topics effectively.

Length and detail

- Description length: Keep descriptions concise. The maximum length is 5,000 characters.

- Use of examples: Including examples in your descriptions can enhance the AI’s ability to match conversations to the correct topics.
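The guidelines above can be checked before you paste a description into the dashboard. The following is a minimal sketch, not part of the product: the function name `check_description` and the idea of keeping a list of key phrases per Topic are assumptions made for illustration. Only the 5,000-character limit comes from this article.

```python
MAX_DESCRIPTION_CHARS = 5000  # limit stated in this article


def check_description(description: str, key_phrases: list[str]) -> list[str]:
    """Return human-readable warnings for a draft Topic description.

    Hypothetical helper: it only inspects the text locally and does not
    call any Ada API.
    """
    warnings = []
    if not description.strip():
        warnings.append("Description is empty.")
    if len(description) > MAX_DESCRIPTION_CHARS:
        warnings.append(
            f"Description is {len(description)} characters; "
            f"the limit is {MAX_DESCRIPTION_CHARS}."
        )
    # Case-insensitive check that each expected key phrase appears verbatim.
    lowered = description.lower()
    missing = [p for p in key_phrases if p.lower() not in lowered]
    if missing:
        warnings.append("Missing key phrases: " + ", ".join(missing))
    return warnings


# Illustrative draft for a password reset Topic.
draft = (
    "End users who cannot sign in and want to reset their password. "
    'Typical phrasing: "forgot my password".'
)
print(check_description(draft, ["forgot my password", "reset password"]))
```

Here the draft says "reset their password" rather than the literal phrase "reset password", so the check reports that phrase as missing. Whether an exact phrase match matters for Topic assignment is not stated in this article; treat the check as a drafting aid only.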

Categories

- Nesting Topics: When defining categories, consider how topics will nest within them. Ensure that category descriptions are detailed enough to guide the system in matching conversations to the appropriate topics.

- Product names: If you have multiple products under a category, it may be beneficial to list product names in both the category and topic descriptions to improve matching accuracy.

Continuous improvement

- Monitor and adjust: Regularly review the performance of your topics and adjust descriptions based on end user interactions and feedback. If certain conversations are not being matched correctly, consider refining the topic descriptions to improve AI recognition.

When you edit a topic’s name or description, your AI Agent immediately starts using the updated information for new conversation assignments. Existing conversation assignments are not changed.

Topic analysis examples

These examples can help you improve your AI Agent by revealing patterns in end user interactions. AI Managers can:

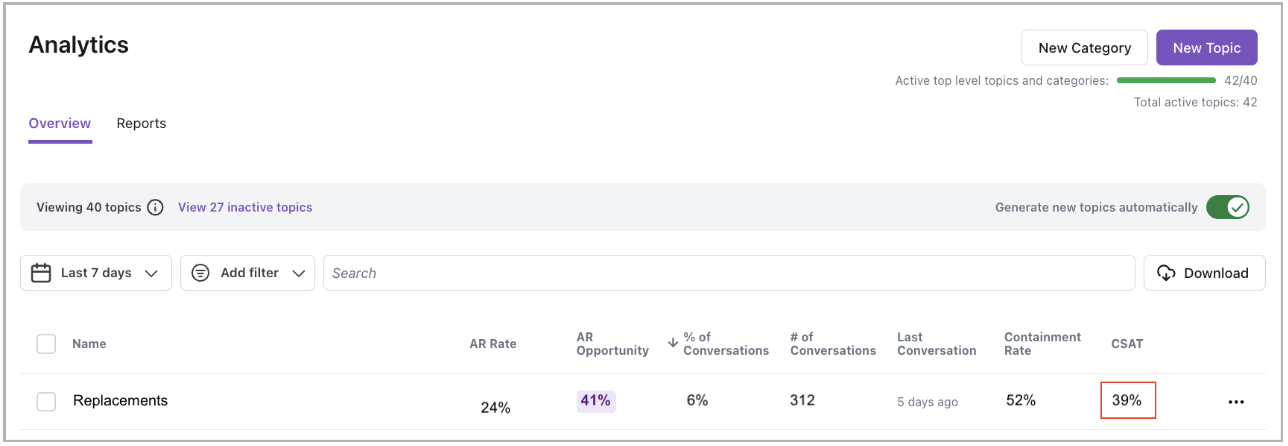

- Start with the Topics tab to review key metrics like Conversation volume, AR Opportunity, and CSAT rate.

- Use overall CSAT scores as another reference point — if CSAT is dropping, check the CSAT rate on the Topics tab.

- Drill into related Conversations to find the issues behind poor CSAT and uncover automation gaps.
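If you copy Topic metrics out of the dashboard into a script, the review steps above can be expressed as a simple triage pass. This is a sketch under stated assumptions: the `TopicMetrics` fields, the representation of AR Opportunity and CSAT rate as fractions, and every threshold are hypothetical, and the sample values are made up for illustration rather than taken from a real report.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TopicMetrics:
    """Hypothetical record of the metrics shown on the Topics tab."""
    name: str
    conversation_volume: int
    ar_opportunity: float        # assumed fraction between 0.0 and 1.0
    csat_rate: Optional[float]   # assumed fraction; None if no survey data


def flag_topics(topics, min_volume=100, min_ar_opportunity=0.30, max_csat_rate=0.60):
    """Return (name, reasons) pairs for Topics worth drilling into.

    Topics are visited from highest to lowest Conversation volume so the
    busiest problem areas come first. All thresholds are illustrative.
    """
    flagged = []
    for topic in sorted(topics, key=lambda t: t.conversation_volume, reverse=True):
        reasons = []
        if (topic.conversation_volume >= min_volume
                and topic.ar_opportunity >= min_ar_opportunity):
            reasons.append("high volume with high AR Opportunity")
        if topic.csat_rate is not None and topic.csat_rate <= max_csat_rate:
            reasons.append("low CSAT rate")
        if reasons:
            flagged.append((topic.name, reasons))
    return flagged


# Made-up sample values, for illustration only.
sample_topics = [
    TopicMetrics("Account cancellation", 300, 0.20, 0.41),
    TopicMetrics("Order status", 2500, 0.05, 0.88),
    TopicMetrics("Refund requests", 1200, 0.45, 0.72),
]

for name, reasons in flag_topics(sample_topics):
    print(f"{name}: {', '.join(reasons)}")
```

With these sample values, Refund requests is flagged for automation potential and Account cancellation for low satisfaction, which mirrors the two kinds of investigation in the examples that follow.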

Example: Refund requests

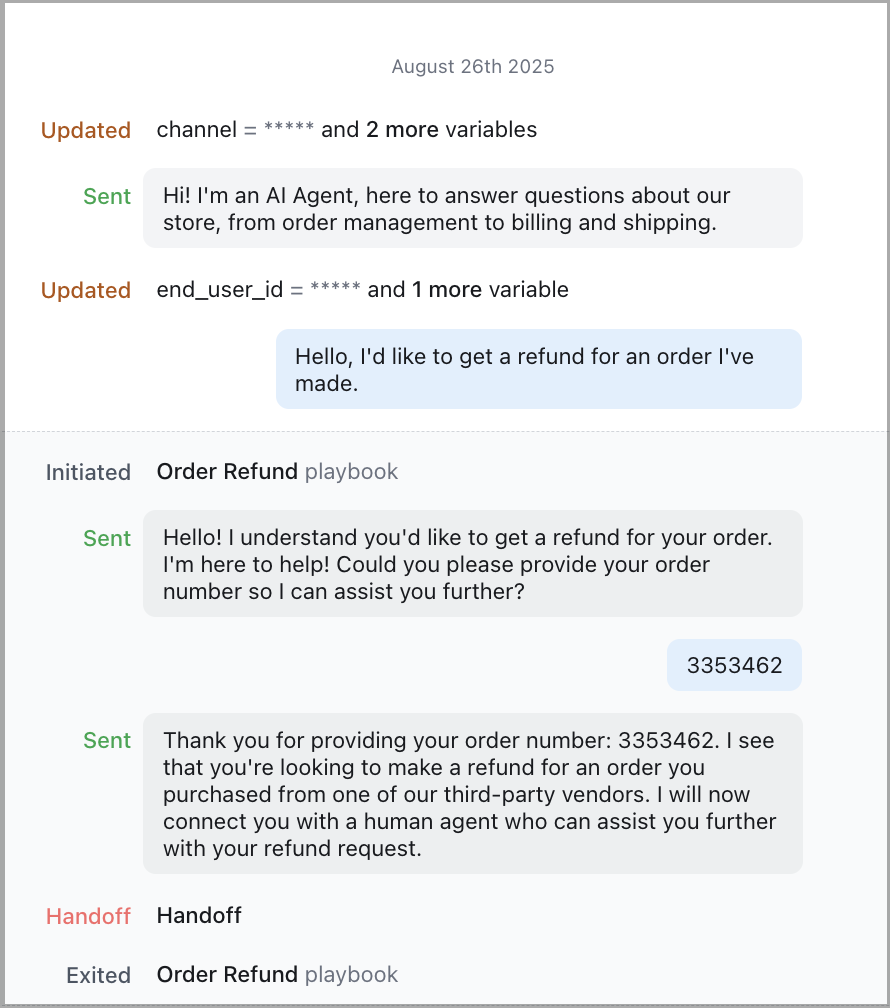

Use this scenario when a Topic shows high volume or a high AR Opportunity—especially for underserved user segments. In this case, a Topic analysis reveals that student users often ask for refunds but rarely receive helpful responses from the AI Agent.

To investigate refund request Topics:

- On the Ada dashboard, go to Analytics.

- On the Topics tab, look for a Refund requests Topic with high Conversation volume and a high AR Opportunity rate.

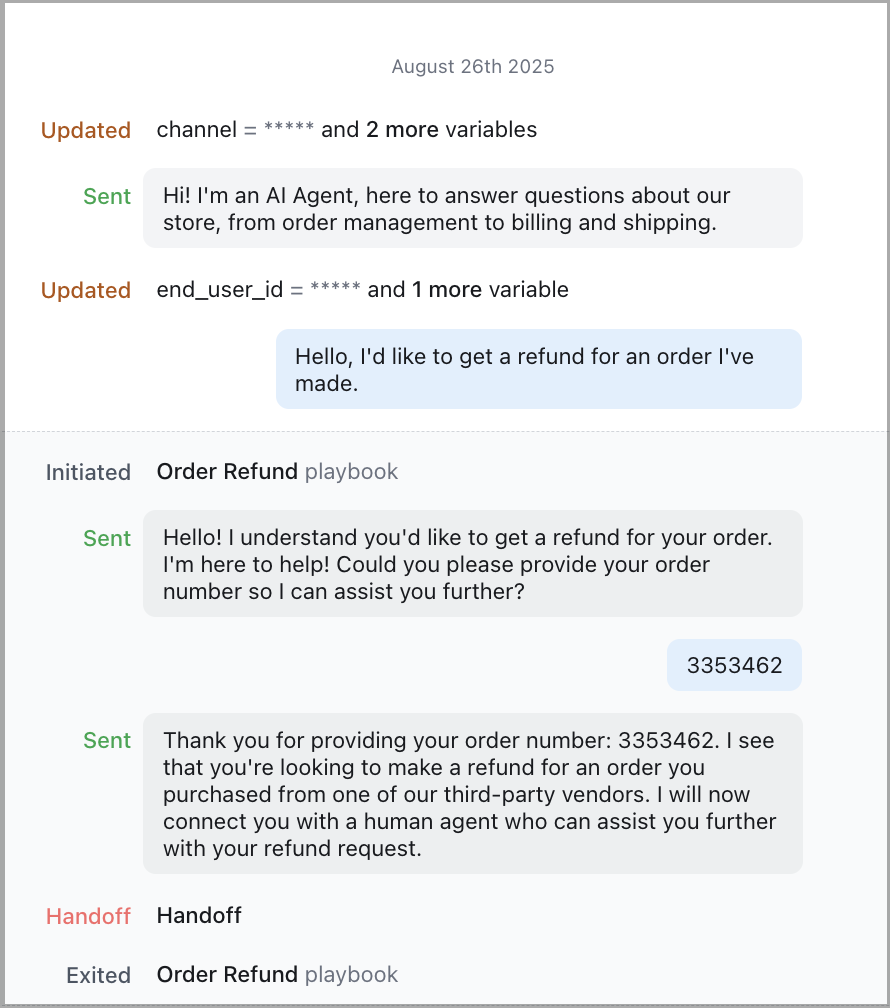

- Drill into the Topic and review related Conversations. You notice student users frequently ask how to get a refund for purchases made through third-party vendors.

- The Agent responds with a generic message or hands off the conversation because no relevant Knowledge article exists.

The Agent uses the same language for all users, even though student users may be less familiar with refund policies and may not know what to expect from support.

These Conversations often end in unnecessary Handoffs that could be avoided with better guidance.

Next steps:

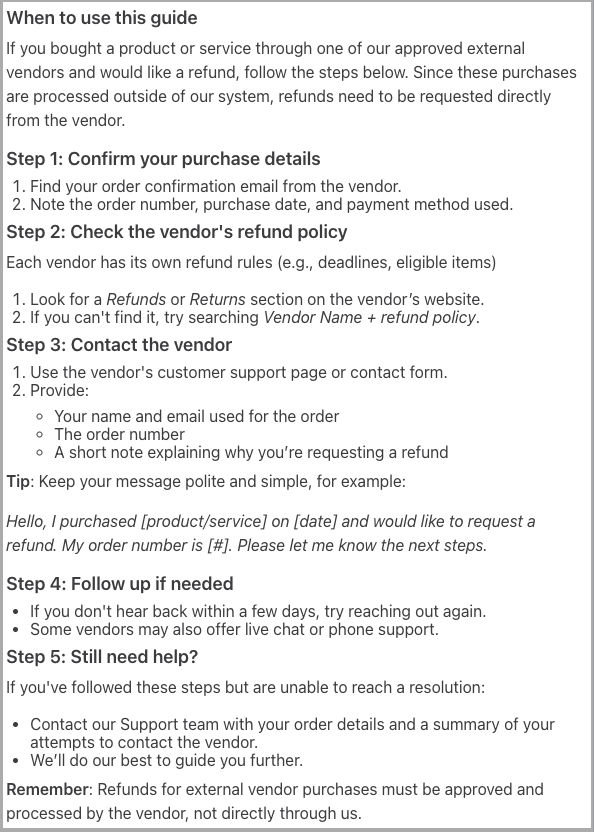

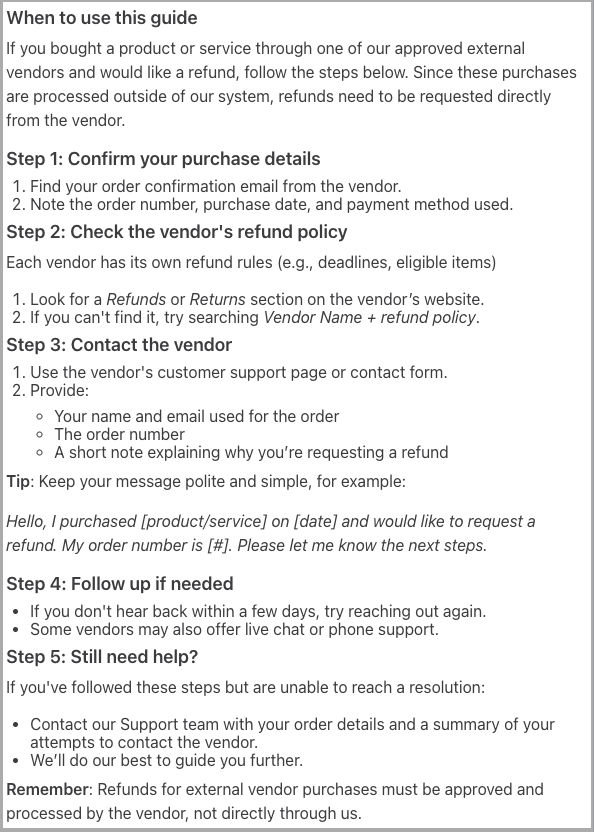

- Add a Knowledge article tailored to student users that explains how to request a refund from an external vendor. For example:

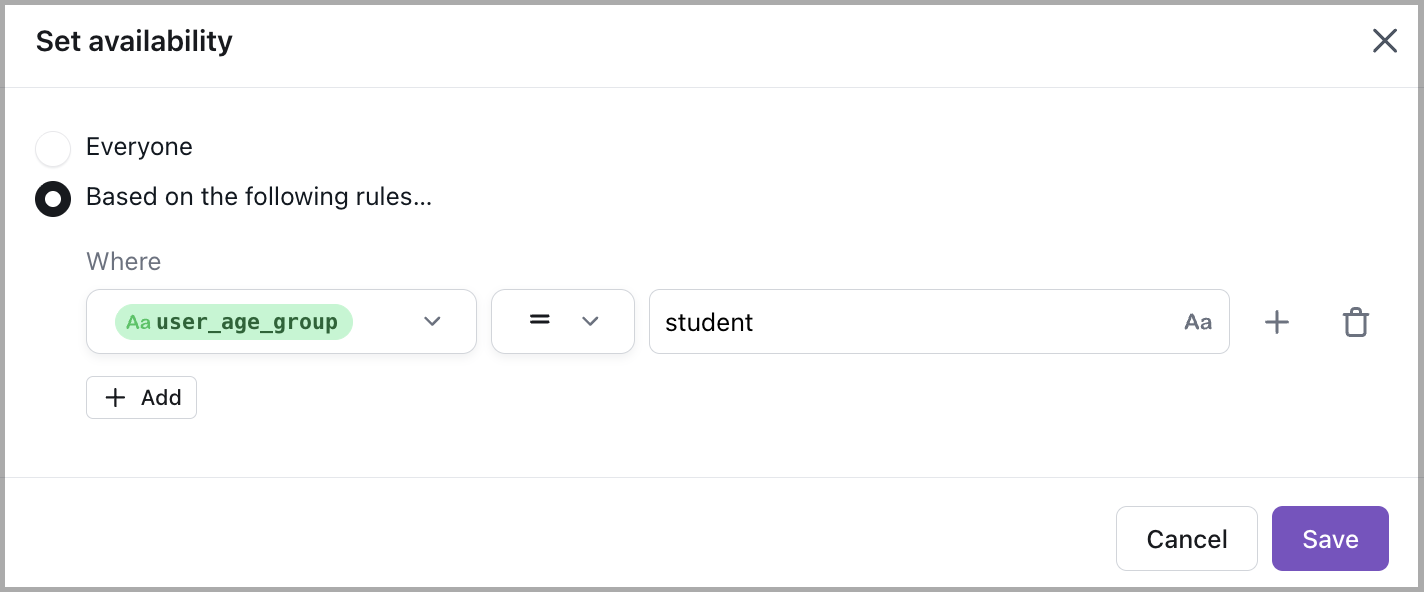

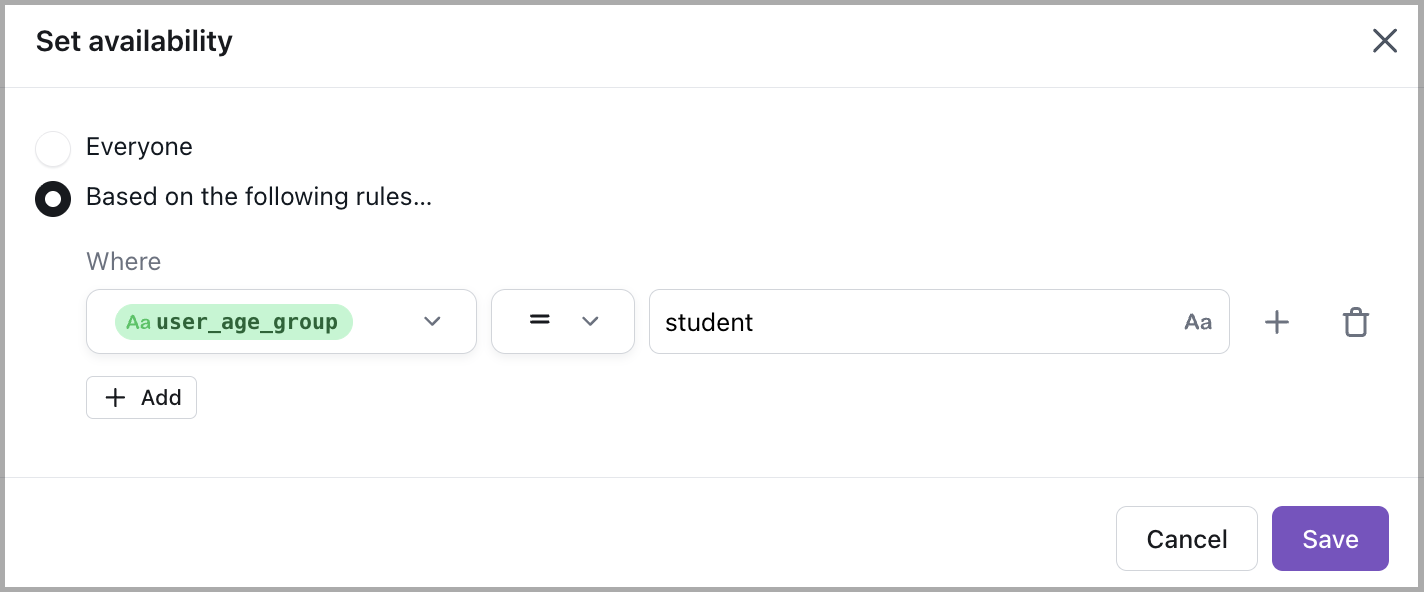

- Apply Personalization rules to adjust tone and messaging for student accounts—creating a more relevant, accessible experience that supports self-serve resolution. For example:

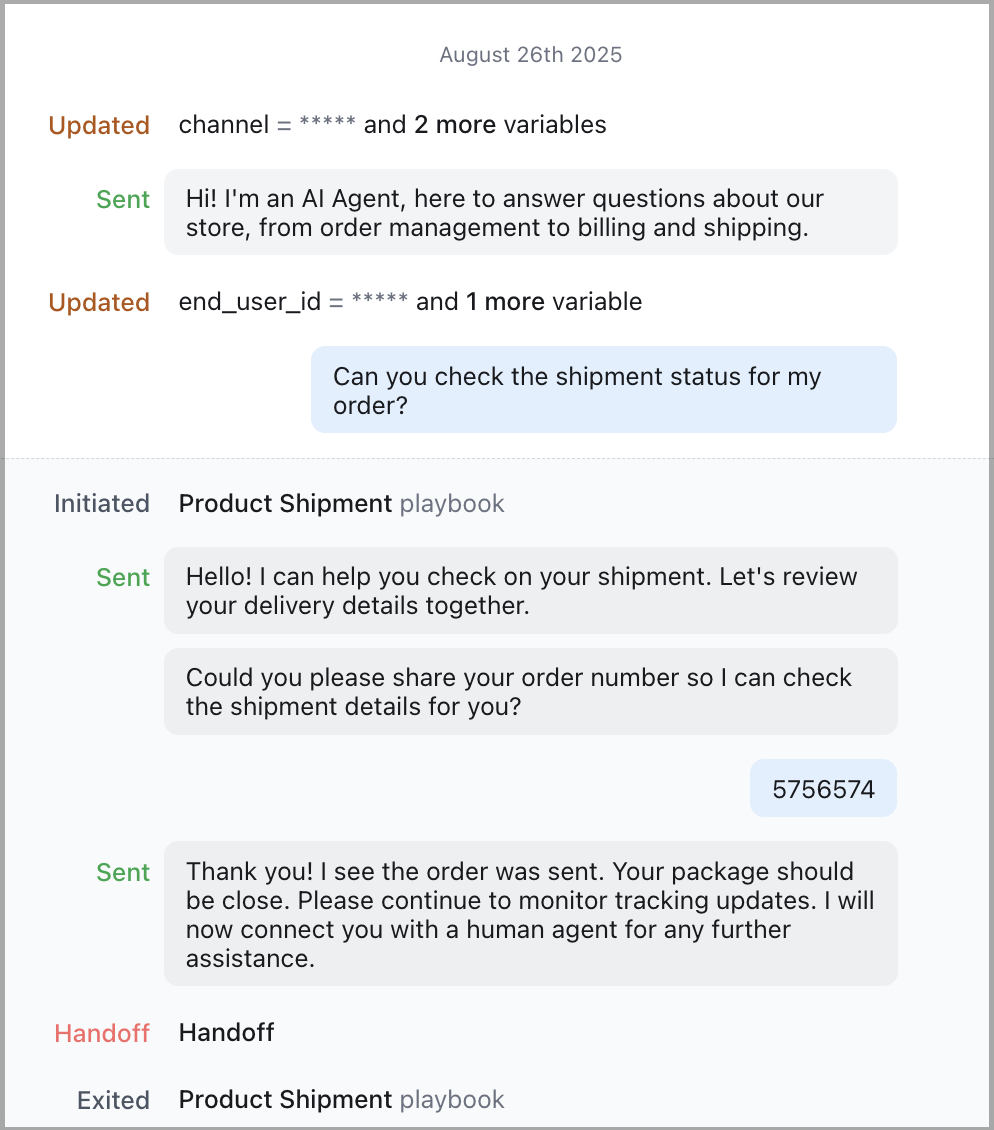

Example: Card fulfillment

Use this scenario when a Topic shows moderate automation but a high AR Opportunity—suggesting the AI Agent is initiating the right flow but consistently handing off where automation could continue.

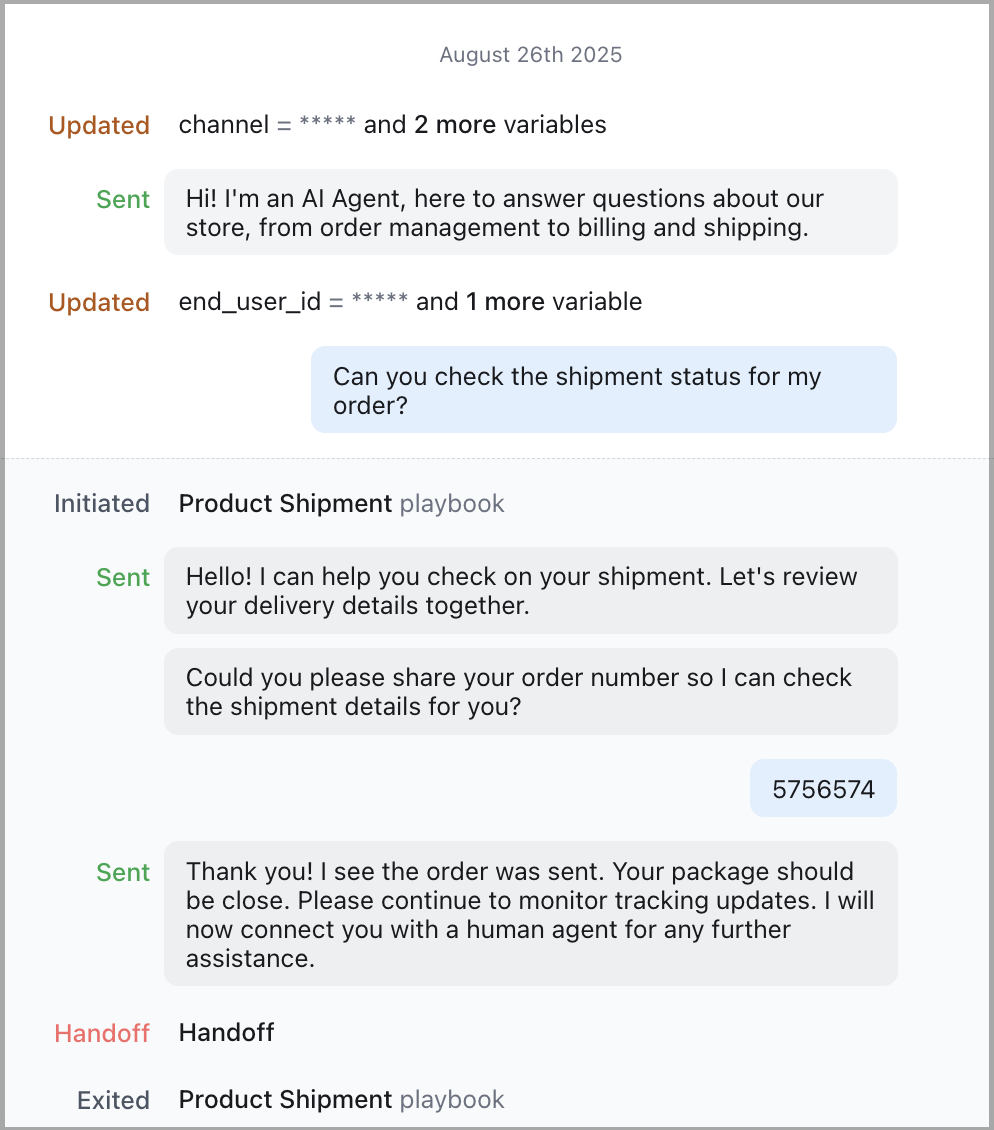

To investigate card fulfillment Topics:

- On the Ada dashboard, go to Analytics.

- On the Topics tab, identify a Card fulfillment Topic with a high AR Opportunity rate.

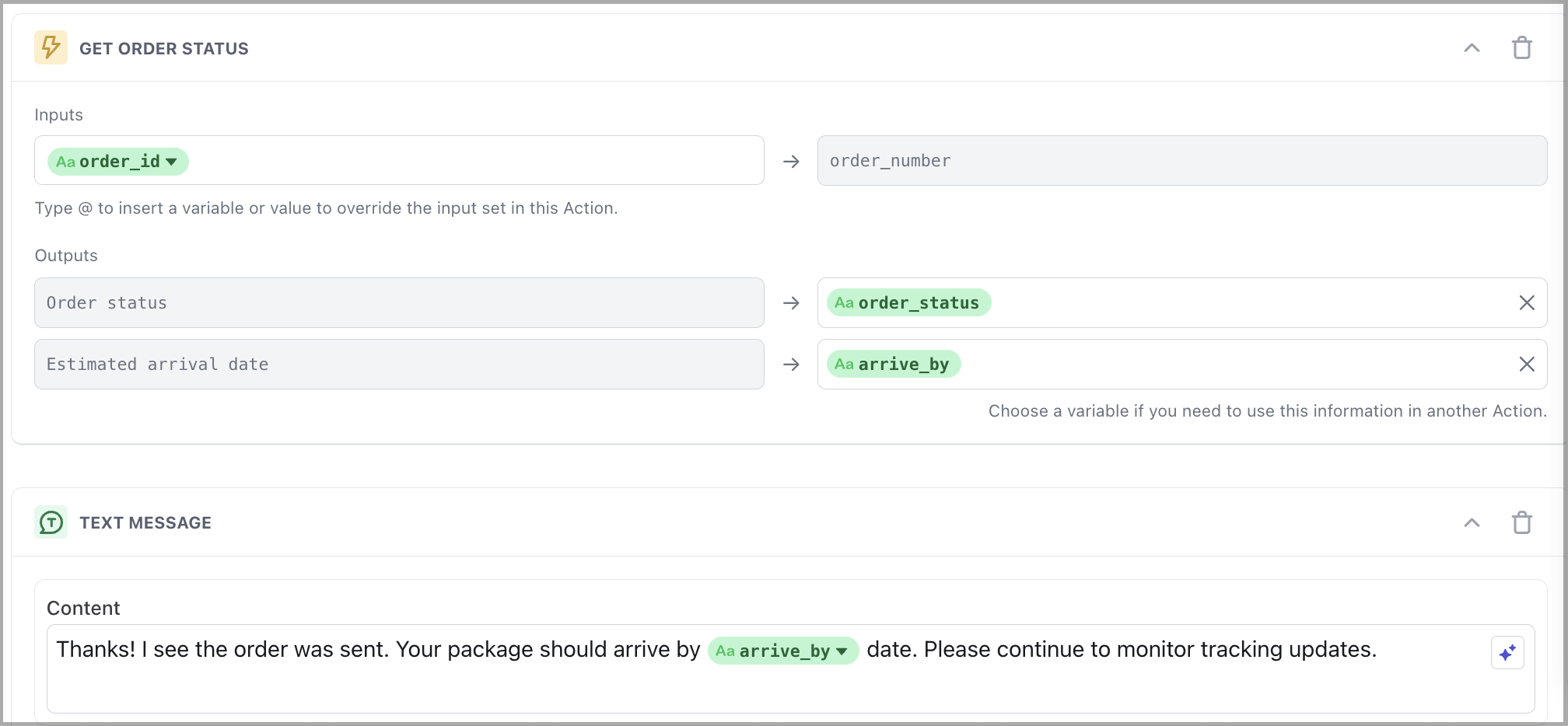

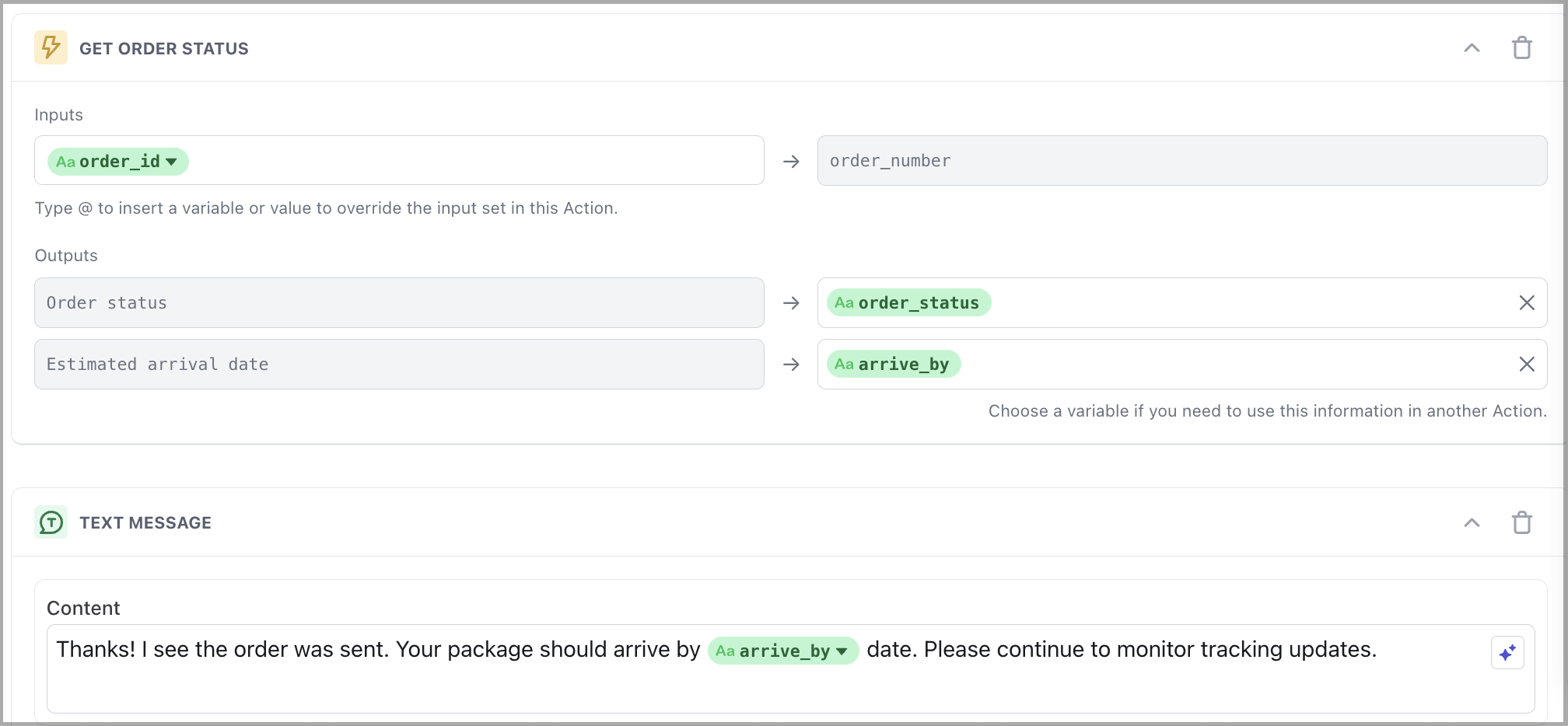

- Drill into the Topic and review Conversations. You find a recurring pattern: the Agent checks shipment details, confirms the delivery address, and provides messaging based on how many days have passed.

- However, if the end user needs to update their address or request a new card, the Agent always hands off—even though this could be automated.

Next steps:

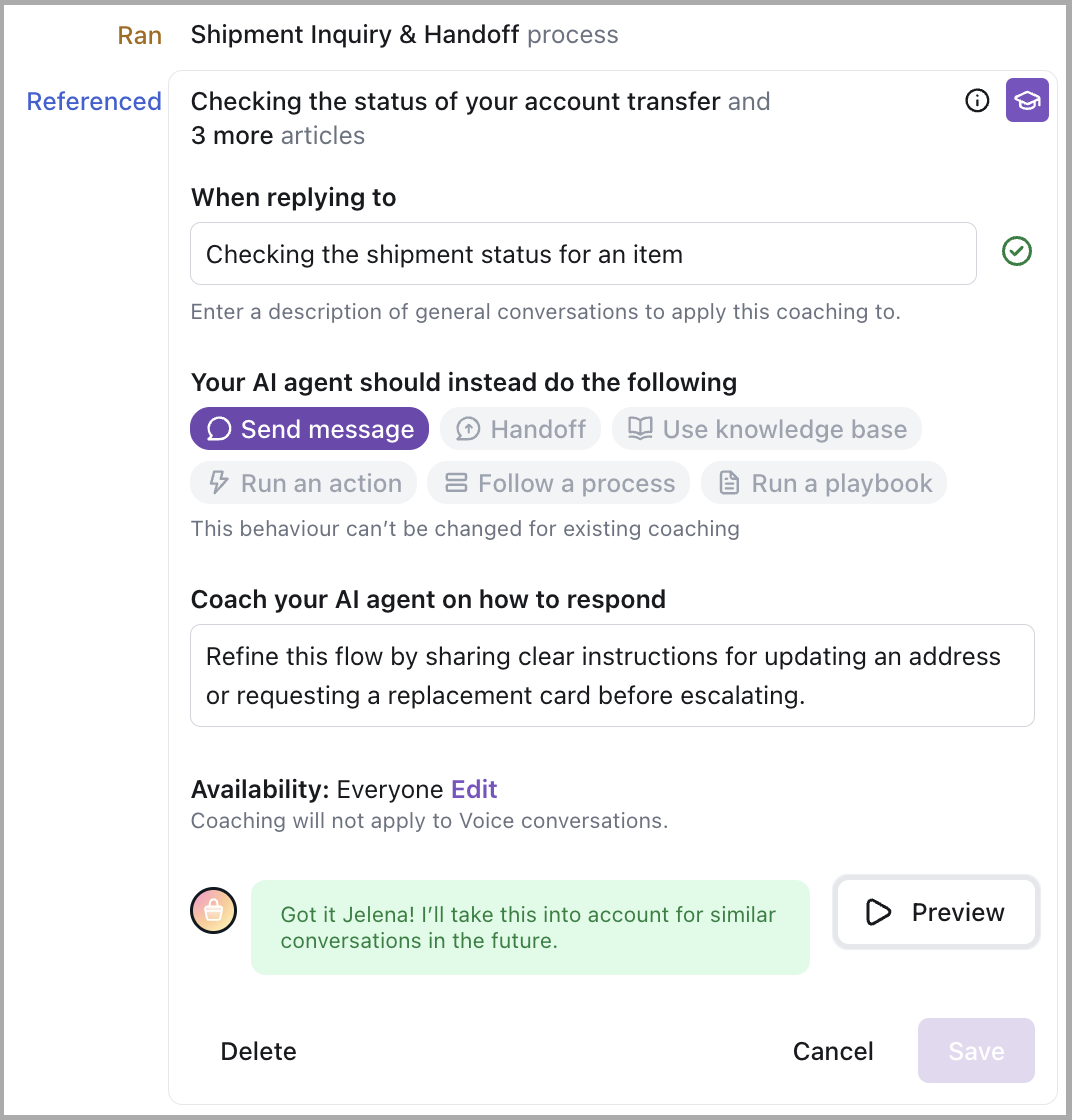

-

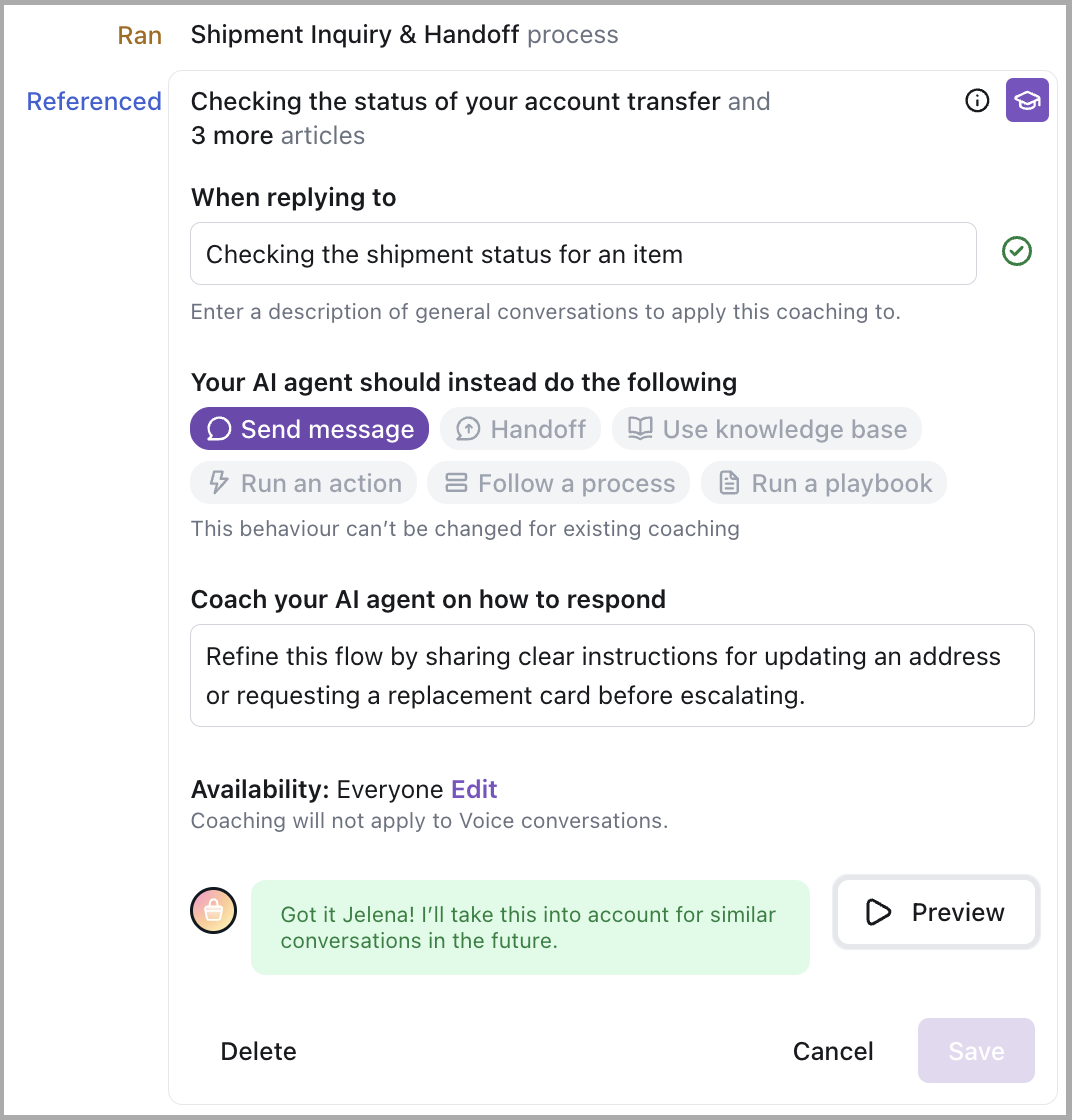

Add Coaching to refine the Agent’s messaging and ensure the end user receives clear, actionable next steps.

-

Build an Action to automate common fulfillment requests like reshipments or address changes—reducing premature handoffs and improving resolution rates.

Example: Account cancellation

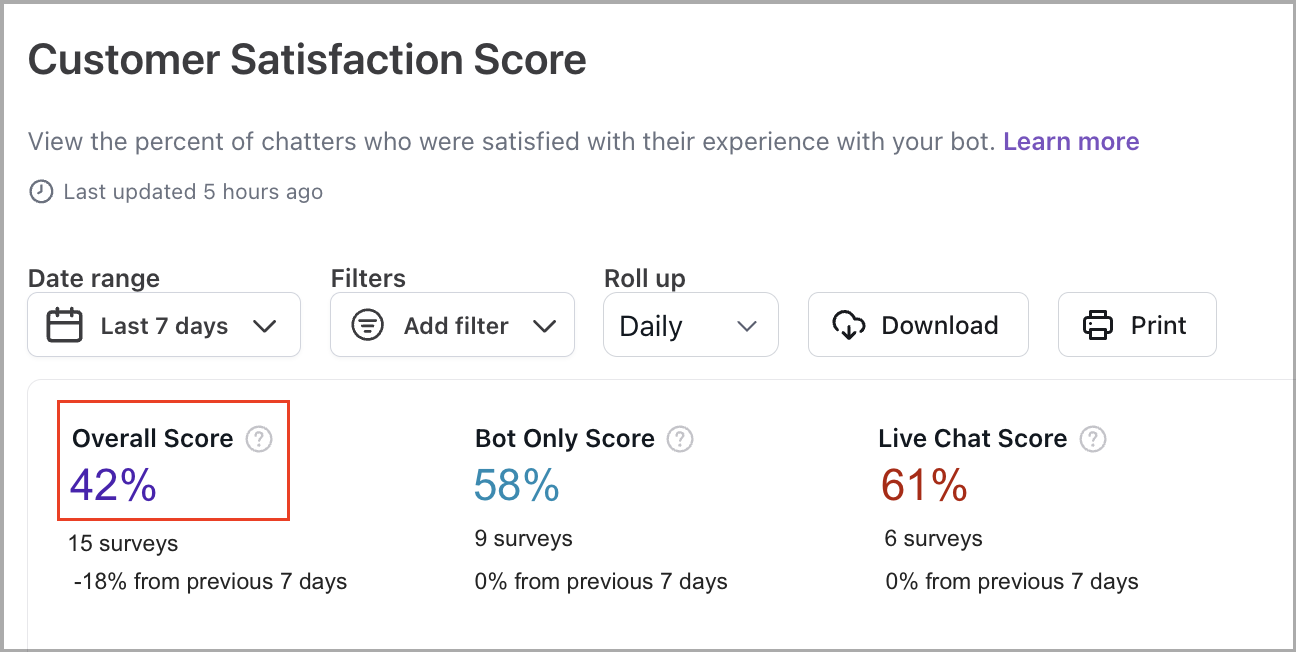

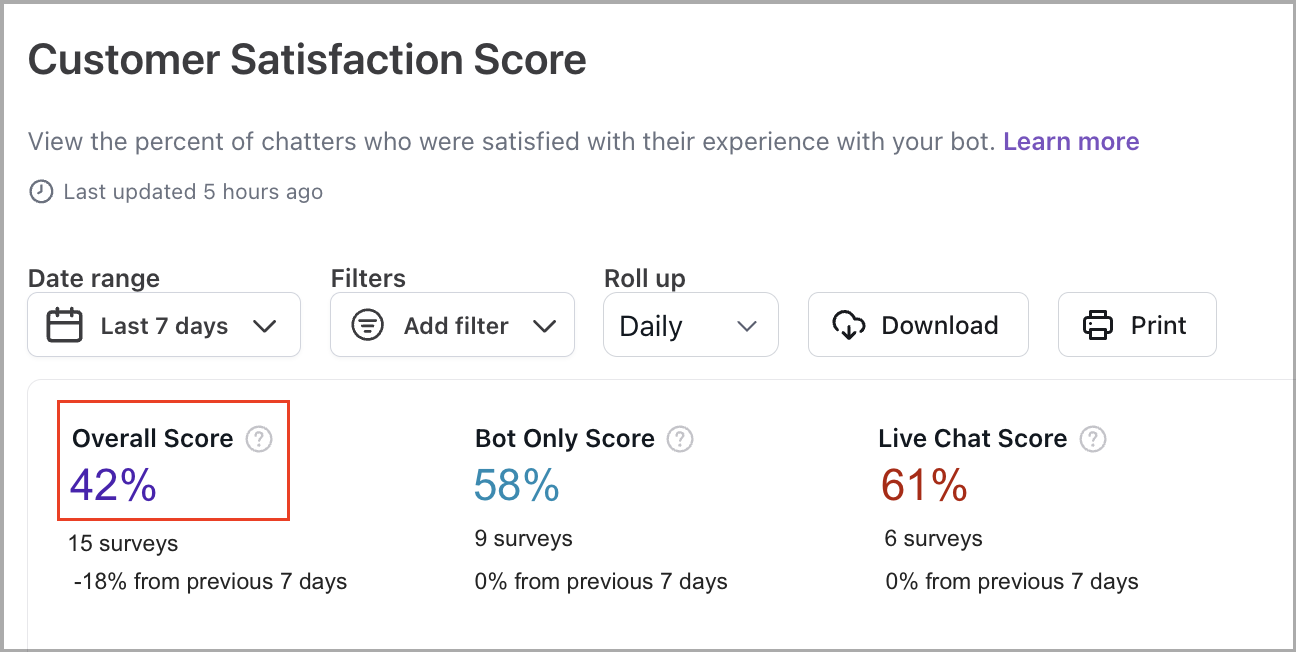

In the Customer Satisfaction Score report, you notice a drop in the Overall Score.

To investigate, go to Analytics and, on the Topics tab, sort by CSAT rate. A Topic related to subscription or account cancellation stands out with consistently low satisfaction, which signals a high-friction experience in a sensitive scenario.

Drilling into the related Conversations reveals a recurring pattern: the AI Agent detects the intent correctly but fails to guide end users through the next steps—resulting in confusion, frustration, and low CSAT.

- An end user asks to cancel their account or subscription.

- The AI Agent detects the intent but responds with a generic message like “Let me connect you with support”, without acknowledging the request or providing guidance.

- There’s no attempt to explain the cancellation process, gather details, or offer relevant resources.

- As a result, the Conversation is handed off immediately—leaving the end user dissatisfied.

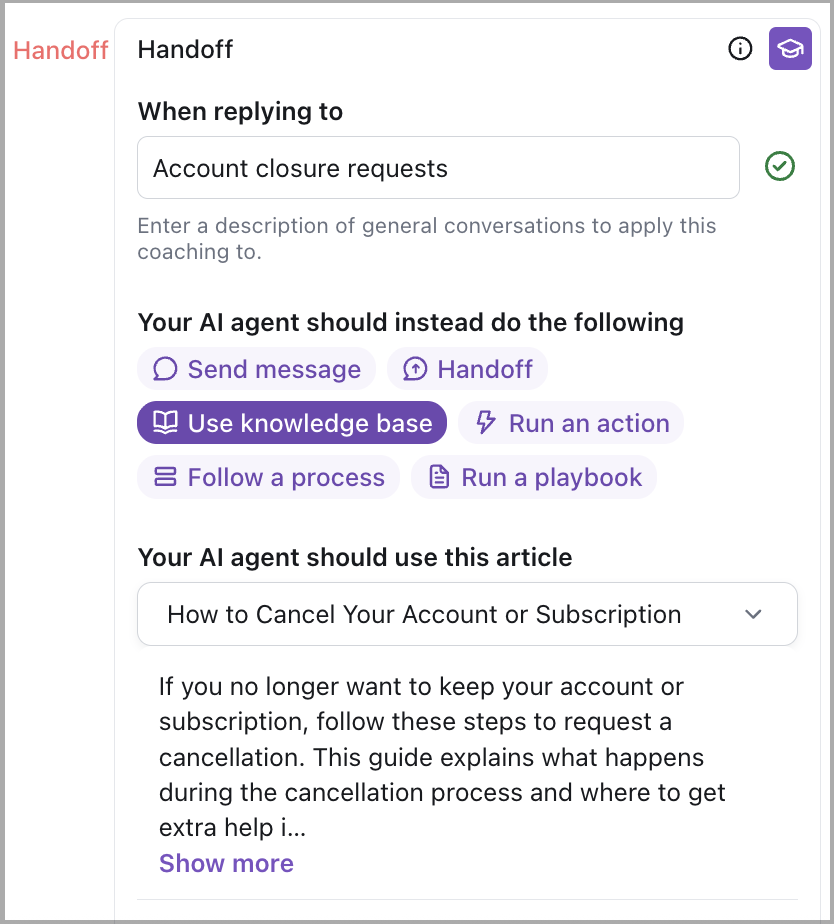

Next steps:

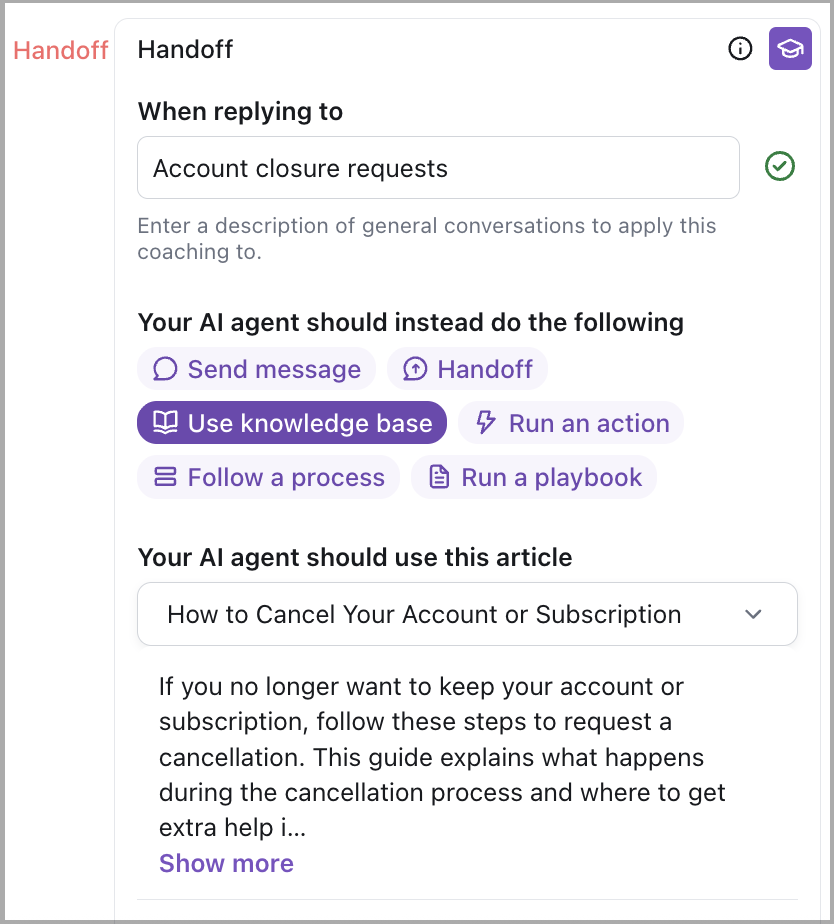

- Create or refine a Knowledge article that outlines the cancellation process in simple, direct language. Include details based on plan types or support policies to improve clarity.

- Use Coaching to train the AI Agent to acknowledge cancellation requests clearly and provide pre-escalation guidance that sets expectations.

These updates will help reduce premature Handoffs, improve CSAT, and ensure end users feel understood and supported at a critical moment.

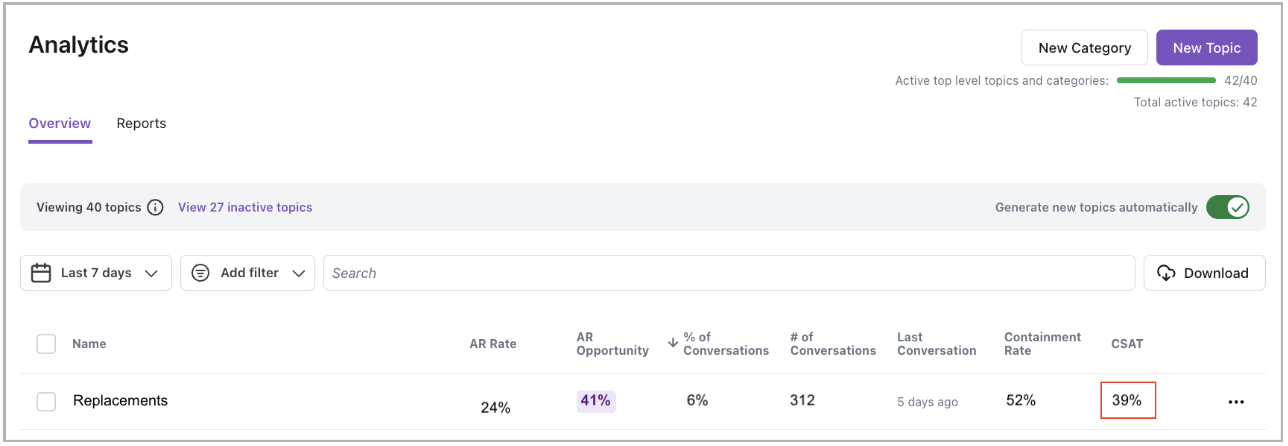

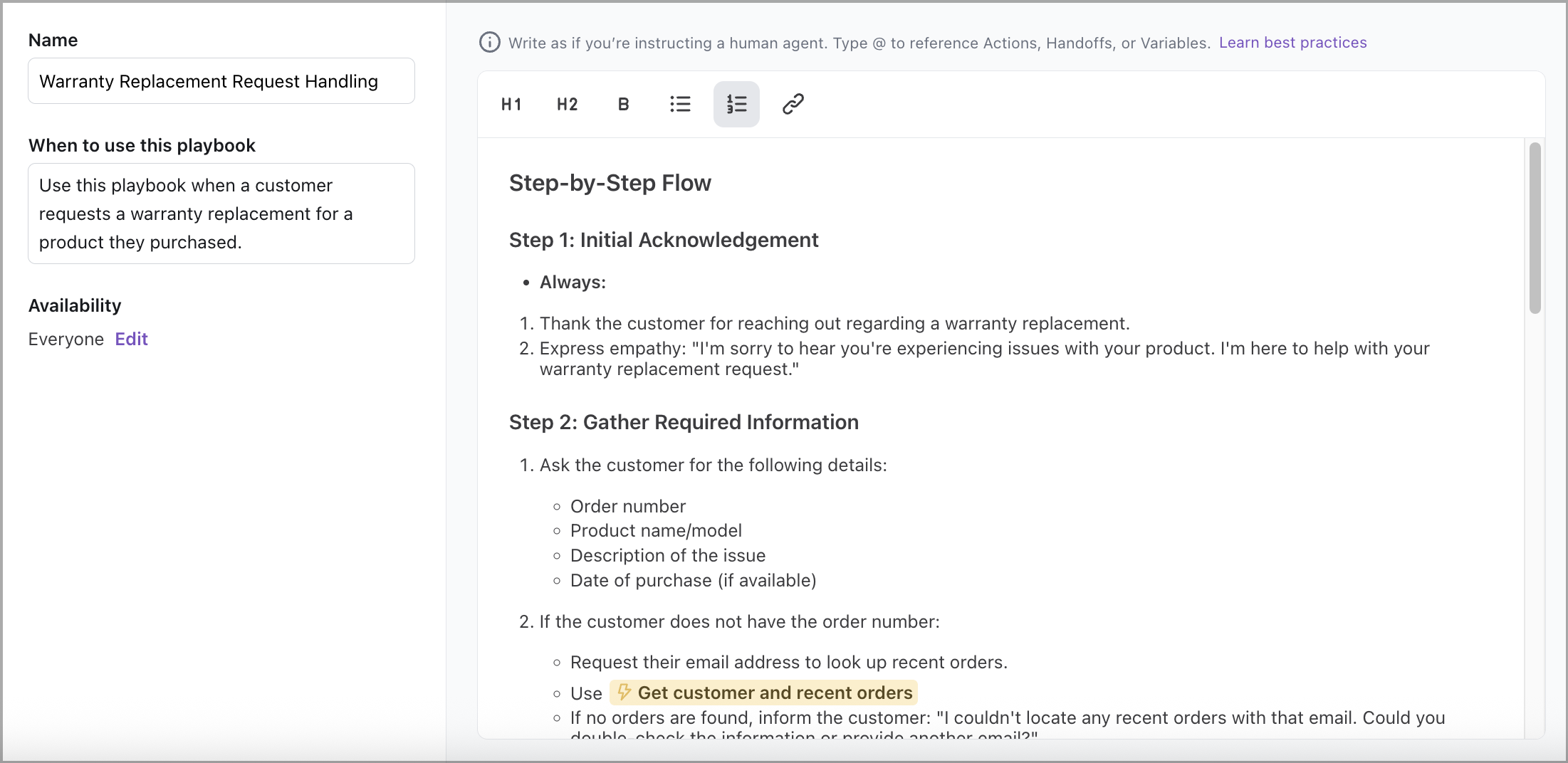

Example: Warranty replacement

Start by reviewing the entries on the Topics tab and sorting by the CSAT rate. You notice that Topics like Replacements or Damaged products are performing poorly, with consistently low satisfaction scores.

Drilling into one of these Topics reveals a common pattern: the AI Agent recognizes the intent but lacks the ability to collect the required details—resulting in early handoffs and a missed opportunity to automate the experience.

- An end user requests a replacement for a damaged product under warranty.

- The Agent acknowledges the request but doesn’t collect critical information like purchase date, product model, or description of the issue.

- Because these inputs are necessary to check eligibility, the Agent hands off the conversation prematurely.

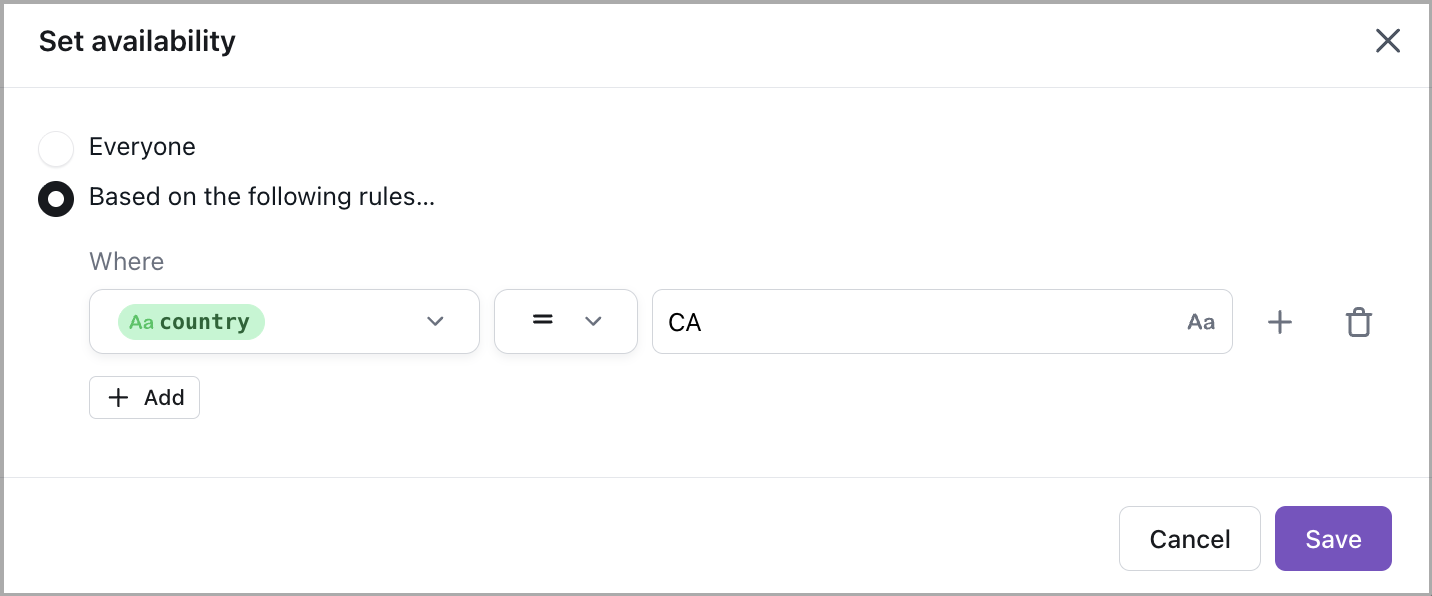

- The Agent also uses the same communication style for all end users, missing the chance to personalize the response based on the end user’s plan (e.g., Premium support) or region.

Next steps:

-

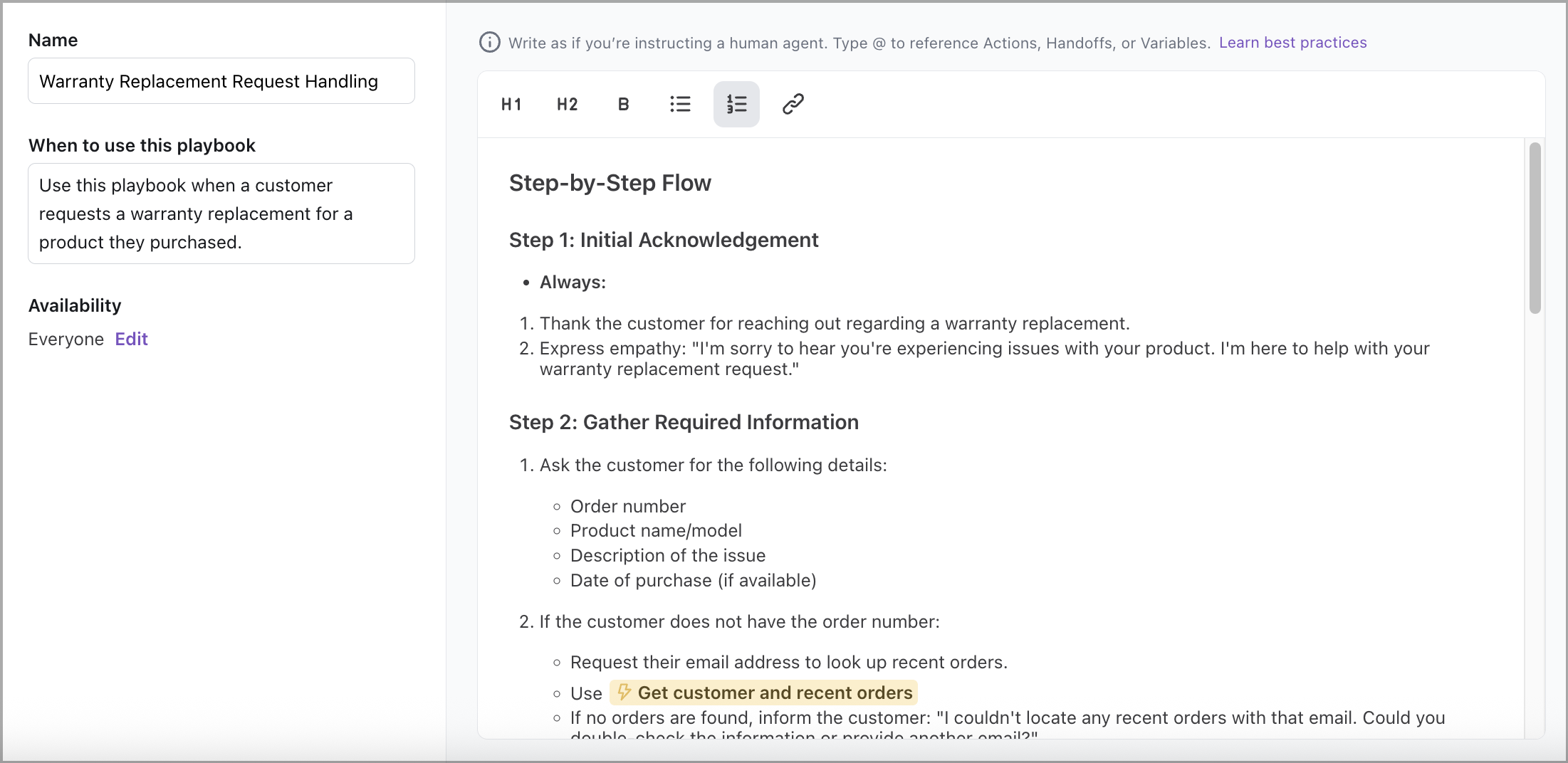

Build a Playbook that gathers all required inputs, checks warranty eligibility through an Action, and either submits the replacement request or provides clear next steps if ineligible.

-

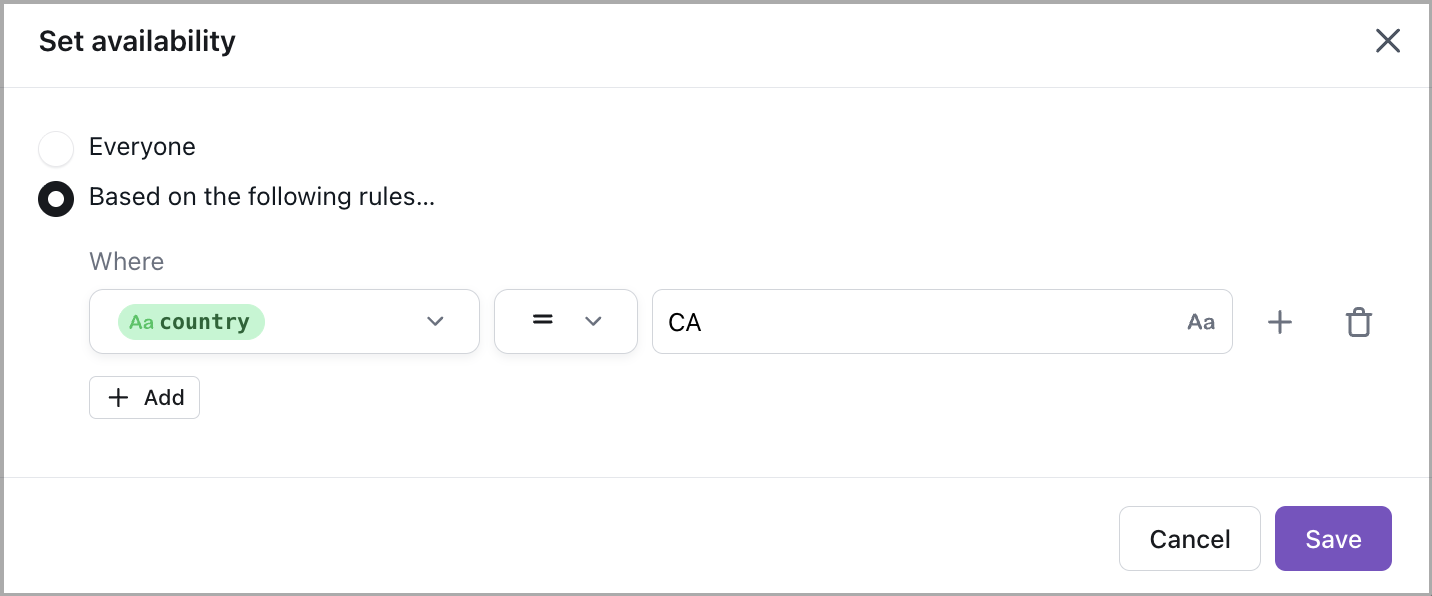

Apply Personalization rules to tailor messaging by user segment—such as using elevated tone for premium members or adding shipping timelines based on region.

These improvements reduce Handoffs, streamline the replacement workflow, and create a more supportive, tailored experience for end users.
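The decision logic behind the warranty Playbook and its eligibility Action can be outlined in a few lines. This is a minimal sketch of the flow described above, not how Playbooks or Actions are configured in the product: the field names, the `next_step` function, the returned step labels, and the 365-day warranty period are all assumptions chosen for illustration.

```python
from datetime import date, timedelta

# Inputs the article says the Agent should collect before checking eligibility.
REQUIRED_FIELDS = ("purchase_date", "product_model", "issue_description")

# Hypothetical policy; substitute your own warranty terms.
WARRANTY_PERIOD = timedelta(days=365)


def missing_inputs(collected: dict) -> list[str]:
    """List the required fields the end user has not provided yet."""
    return [field for field in REQUIRED_FIELDS if not collected.get(field)]


def next_step(collected: dict, today: date) -> str:
    """Decide what the conversation should do next.

    Returns a label only; a real Action would call your own systems.
    """
    missing = missing_inputs(collected)
    if missing:
        # Keep gathering details instead of handing off early.
        return "ask_for: " + ", ".join(missing)
    if today - collected["purchase_date"] <= WARRANTY_PERIOD:
        return "submit_replacement_request"
    return "explain_ineligibility"


today = date(2024, 6, 1)
print(next_step({"product_model": "X1"}, today))
print(next_step(
    {
        "purchase_date": date(2024, 1, 1),
        "product_model": "X1",
        "issue_description": "Cracked screen on arrival",
    },
    today,
))
```

The point of the sketch is the ordering: the handoff described in the example happens because the eligibility check cannot run without these inputs, so collecting them first is what makes the rest of the flow automatable.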